Your AI Is Only as Smart as the Instructions You Give It

Why prompt engineering is the most underestimated layer in your AI stack — and what to do about it

Most executives evaluating AI today are asking the wrong question. They want to know which model is best (Claude, GPT-4, or Gemini), as if the right answer were a procurement decision. It isn't. The model is the least interesting part of the problem.

The real question is: what sits between that model and your business outcomes? Because left to its own devices, a large language model is extraordinarily capable and almost entirely useless. It needs direction. It needs structure. It needs what the industry calls prompt engineering, and most organizations are either ignoring it entirely or treating it as a footnote to their AI strategy.

That's a mistake with measurable consequences.

What the Model Actually Does

A large language model is, at its core, a very sophisticated pattern-completion engine. It predicts the most statistically likely next token based on everything it was trained on and everything you give it as input. It has no memory between sessions (although that is changing, and I'll discuss persistence of context in a subsequent article), no understanding of your business context, no awareness of your customers, and no inherent goal beyond producing a plausible continuation of the text you started.

This is not a criticism. It's a design reality, and understanding it is the prerequisite for using AI effectively. When you simply open a chat interface and type a question, you're asking the model to infer everything it doesn't know: your industry, your standards, your audience, your intent. Sometimes it gets close. More often, it produces something generic, inconsistent, or confidently wrong.

Standard chat is exploration. It's valuable for individuals learning, brainstorming, or getting quick answers. But it is not a production capability. If your AI strategy consists primarily of employees using chat interfaces, you haven't built an AI capability; you've bought a subscription.

The Layer That Changes Everything

Prompt engineering is the practice of deliberately crafting the inputs to an AI model to produce reliable, high-quality, repeatable outputs. It is the difference between asking a question and designing an instruction. Done well, it transforms a general-purpose model into something that behaves like a specialist trained specifically for your context.

The techniques are not magic. They include giving the model a specific role and context before asking it anything (system prompts), showing it examples of the exact output format you need (few-shot prompting), instructing it to reason through a problem before answering (chain-of-thought), and injecting relevant business data at runtime so responses reflect your actual situation rather than generic training data (retrieval-augmented generation, or RAG).
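
To make those four techniques less abstract, here is a minimal sketch of how they might be combined into a single request. Everything in it is an assumption for illustration: the `PromptSpec` class, the `build_messages` function, the logistics scenario, and the chat-style list of role/content dictionaries are hypothetical and not tied to any particular vendor's API.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the techniques described above."""
    role: str                                   # system prompt: role and context
    examples: list[tuple[str, str]] = field(default_factory=list)  # few-shot pairs
    reason_first: bool = True                   # chain-of-thought instruction


def build_messages(spec: PromptSpec, question: str, retrieved: list[str]) -> list[dict]:
    """Assemble a chat-style message list (the dict shape is an assumption)."""
    system = spec.role
    if spec.reason_first:
        system += "\nReason through the problem step by step before giving a final answer."
    if retrieved:
        # RAG: inject business data at runtime instead of relying on training data.
        context = "\n".join(f"- {doc}" for doc in retrieved)
        system += f"\nUse only the following context:\n{context}"

    messages = [{"role": "system", "content": system}]
    # Few-shot: show the model what a good answer looks like before asking.
    for user_text, ideal_reply in spec.examples:
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": ideal_reply})
    messages.append({"role": "user", "content": question})
    return messages


spec = PromptSpec(
    role="You are a support analyst for a (hypothetical) logistics company.",
    examples=[("Where is order 1001?", "Status: in transit. Next step: none.")],
)
messages = build_messages(spec, "Where is order 2002?", ["Order 2002 shipped on Monday."])
```

The point of the sketch is that the user's question is only the last and smallest piece of what the model receives. The role, the example, the reasoning instruction, and the retrieved fact are all designed in advance.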

None of this requires a PhD in machine learning. It requires discipline, iteration, and a clear understanding of what outcome you need. The organizations seeing real ROI from AI are the ones who have invested in this layer. They treat prompt design with the same rigor they apply to software development or process design.

The balance that matters most in prompt engineering is between specificity and flexibility. Too specific and the system becomes brittle. This is the prompt-design equivalent of what statisticians call “overfitting”: the prompt works perfectly for the exact scenario you designed it for and fails abruptly on anything adjacent. You've essentially hard-coded assumptions about the input, and the moment reality doesn't match those assumptions, quality drops. Too flexible and you've given the model too little direction. It fills the ambiguity with its own judgment, which may or may not align with what you need. Outputs become inconsistent. Different runs produce different results. You're back to the unpredictability of raw chat.

Finding the right point between those extremes requires iteration. You design a prompt, test it against a range of realistic inputs, including edge cases and unusual scenarios, watch where it breaks, and tighten or loosen accordingly. It's genuinely empirical work, more like tuning an instrument than writing a specification.
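
That design, test, and adjust loop can be sketched in a few lines. This is an illustration under stated assumptions, not a real evaluation framework: `fake_model` is a hypothetical stand-in for an actual model call so the example runs offline, and `evaluate`, the template, and the test cases are invented for the purpose.

```python
from typing import Callable


def fake_model(prompt: str) -> str:
    """Offline stand-in for a real model call (assumption, not a real API)."""
    text = prompt.split("INPUT:", 1)[1].strip()
    if not text:
        return ""                      # simulates a breakdown on empty input
    return f"SUMMARY: {text[:40]}"


def evaluate(
    template: str,
    cases: list[str],
    check: Callable[[str], bool],
    model: Callable[[str], str] = fake_model,
) -> list[str]:
    """Run every case through the prompt and return the inputs that fail the check."""
    failures = []
    for case in cases:
        output = model(template.format(input=case))
        if not check(output):
            failures.append(case)
    return failures


template = "Summarize the text in one line, starting with 'SUMMARY:'.\nINPUT: {input}"
# A realistic input, an empty edge case, and a non-English input.
cases = ["Quarterly revenue rose 4%.", "", "¿Dónde está mi pedido?"]
failures = evaluate(template, cases, check=lambda out: out.startswith("SUMMARY:"))
```

Here the empty input is the one that breaks, which is the kind of finding that tells you where to tighten the prompt before the next round.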

There are a few secondary balances that fall out of that central one. One is between instruction and example. Sometimes telling the model what to do is less effective than showing it a few examples of what good looks like, and the right ratio depends on the complexity of the task. Another is between constraint and creativity. Tighter constraints produce more consistent outputs but can make responses feel mechanical, which matters if tone and voice are part of the deliverable. A third is between context richness and token efficiency. More context generally produces better results, but every token costs money and adds latency, so at production scale you're constantly trimming what's unnecessary.

The underlying skill is knowing your model well enough to anticipate how it will interpret ambiguous instructions, which is why the manual experimentation phase can't be skipped. You're not just writing instructions; you're developing a mental model of how the AI thinks and calibrating accordingly.

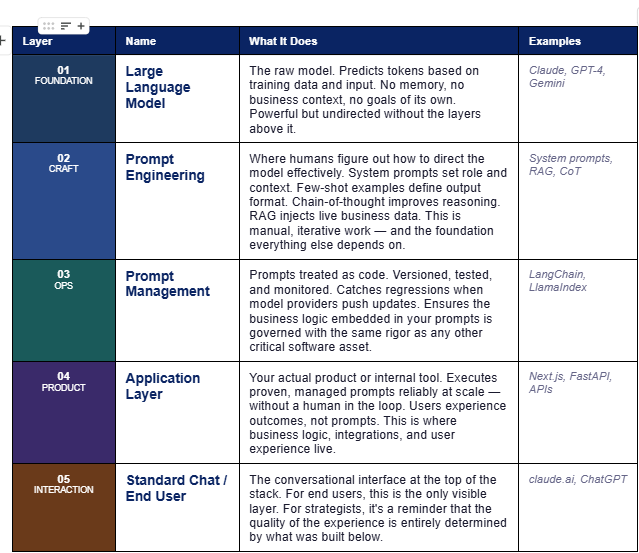

The Five-Layer AI Stack: A Framework for Executives

To make this concrete, it helps to think about enterprise AI architecture as a five-layer stack. Each layer builds on the one below it. Weakness at any layer limits what's possible above it. Here is how those layers work, from the ground up.

The practical implication of this framework is straightforward: most AI investments that underperform are failing at Layer 02 or Layer 03, not Layer 01. They chose a capable model, skipped the craft and operational discipline, and built their product on an unstable foundation. The outputs are inconsistent, the system is fragile, and nobody can explain why.

Investing in the model is necessary but not sufficient. The stack above it is where competitive differentiation actually lives.

This Is an Engineering Problem, Not Just a UX Problem

Here is where most AI implementations stall. A team spends weeks finding the right prompts, gets them working, and then embeds them directly into an application with no versioning, no testing, and no monitoring. Six months later, the model provider releases an update. Behavior changes subtly. Outputs degrade. Nobody knows why.

This is why prompt management (treating prompts as code) is not optional infrastructure. It's an operational discipline. Your prompts need version control so you know what changed and when. They need automated testing so you catch regressions before your customers do. They need monitoring in production so you can detect drift when a model update changes behavior downstream.
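
As a rough sketch of what "prompts as code" can look like, the example below gives each prompt a version and a content fingerprint, then runs a small regression suite against it. All names here (`PromptVersion`, `regression_failures`, `stub_model`, `drifted_model`, the triage prompt) are hypothetical, and the model functions are offline stand-ins rather than real provider calls.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: str
    template: str

    @property
    def fingerprint(self) -> str:
        """Short content hash, so any edit to the template is detectable."""
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]


def regression_failures(
    prompt: PromptVersion, golden: dict[str, str], model: Callable[[str], str]
) -> list[str]:
    """Return the golden inputs whose output no longer contains the expected text."""
    return [
        inp for inp, expected in golden.items()
        if expected not in model(prompt.template.format(input=inp))
    ]


def stub_model(text: str) -> str:
    """Offline stand-in for a provider call (assumption)."""
    return "REFUND APPROVED" if "refund" in text.lower() else "ESCALATE"


def drifted_model(text: str) -> str:
    """Simulates a provider update that changes output casing."""
    return stub_model(text).lower()


v1 = PromptVersion("triage", "1.0.0", "Classify the request: {input}")
v2 = PromptVersion("triage", "1.1.0", "Classify the customer request: {input}")
golden = {"I want a refund": "REFUND", "My package is lost": "ESCALATE"}

before_update = regression_failures(v2, golden, stub_model)     # no failures
after_update = regression_failures(v2, golden, drifted_model)   # both cases fail
```

The same golden set serves two purposes: run it when a prompt changes to catch regressions, and run it on a schedule in production to notice when the model underneath has shifted.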

Tools like LangChain and LlamaIndex have built parts of this infrastructure. But the mindset shift matters more than the tooling. Prompts are business logic. They encode your standards, your voice, your rules. They deserve to be governed accordingly.

Configurability: The Strategic Differentiator

The most sophisticated question in enterprise AI architecture right now is not which model to use; it's how configurable your AI system should be, and at what level.

Well-designed AI systems expose configurability at multiple layers. End users might adjust tone, format, or domain focus through a simple interface, choosing from options you've already engineered. Administrators or operators can tune deeper behaviors: which knowledge base to search, which workflow to trigger, how conservative or creative the model should be. Developers have full access to the underlying prompt templates and orchestration logic.
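
One way to picture those three levels is the sketch below. It is an assumption-laden illustration, not a reference design: the template, the allowed tones and domains, the `OperatorSettings` fields, and the 0.0 to 1.0 temperature guardrail are all hypothetical choices made for the example.

```python
from dataclasses import dataclass

# Developer layer: full control of the underlying template (hypothetical).
TEMPLATE = "You are a {domain} assistant. Write in a {tone} tone.\nQuestion: {question}"

# Options engineered in advance; end users may only choose among these.
ALLOWED_TONES = {"formal", "friendly"}
ALLOWED_DOMAINS = {"billing", "shipping"}


@dataclass
class OperatorSettings:
    """Operator layer: deeper behaviors, bounded by guardrails."""
    knowledge_base: str = "kb-default"
    temperature: float = 0.2   # lower is more conservative

    def __post_init__(self) -> None:
        # Assumed guardrail for this sketch, not a vendor limit.
        if not 0.0 <= self.temperature <= 1.0:
            raise ValueError("temperature must be between 0.0 and 1.0")


def build_request(question: str, tone: str, domain: str, operator: OperatorSettings) -> dict:
    """End-user layer: validate choices, then fill the developer's template."""
    if tone not in ALLOWED_TONES:
        raise ValueError(f"unsupported tone: {tone!r}")
    if domain not in ALLOWED_DOMAINS:
        raise ValueError(f"unsupported domain: {domain!r}")
    return {
        "prompt": TEMPLATE.format(domain=domain, tone=tone, question=question),
        "knowledge_base": operator.knowledge_base,
        "temperature": operator.temperature,
    }


request = build_request("Why was I charged twice?", "formal", "billing", OperatorSettings())
```

Adding a new tone or pointing at a different knowledge base becomes a configuration change inside established guardrails, not a rewrite of the prompt.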

This architecture matters for two reasons. First, it creates durability. A hardcoded AI system becomes a liability the moment your business changes. A configurable one adapts without requiring an engineering engagement every time. Second, it creates leverage. You build the framework once, establish the guardrails, and let the business configure within them. That is how AI scales across an organization without becoming a maintenance burden.

What This Means for Executive Decision-Making

If you are evaluating or overseeing AI initiatives in your organization, the questions worth asking are not about which model scored best on a benchmark. They are about the layers above the model.

Are your prompts versioned and tested? If not, you have undocumented business logic sitting in plain text files that nobody owns. Do your AI outputs degrade silently when vendors push model updates? If you don't know, you probably don't have monitoring in place. Is your AI system configurable enough that the business can adapt it, or does every change require a developer? If the latter, your AI has a scaling problem built into its architecture.

These are infrastructure questions, not research questions. The technology to answer them well exists today. What's missing in most organizations is the architectural discipline to ask them in the first place.

The Bottom Line

The gap between AI that impresses in a demo and AI that delivers in production is almost never the model. It's everything between the model and the outcome: the quality of the instructions, the rigor of the engineering, the discipline of the operations, and the thoughtfulness of the architecture.

Prompt engineering is not a technical curiosity. It is the foundational competency of production AI. The organizations that implement it properly will realize an actual return on their AI investment rather than merely generating “lots of words”.

The model doesn't know your business. That's your job to articulate.

Tom Ackerman is the founder of Ackerman Strategic Advisory, specializing in enterprise AI architecture and technology transformation.